Weeping soldiers, allegations of desertion – how AI videos on social media are distorting public perceptions about the Ukraine war

AI-generated content showing emotionally broken Ukrainian soldiers in tears, declaring their intention to desert, is spreading on social networks. Some of the videos have clocked up millions of views and appear tailored to a deliberate disinformation campaign, even though their technical shortcomings are undeniable. A GADMO investigation by the German-language fact-checking team of Agence France-Presse (AFP) shows how this content has spread internationally, which narratives about the Ukraine war it is meant to convey, and how long AI tools have been systematically used for such purposes.

An emotional new propaganda campaign, created with the help of artificial intelligence (AI), has flooded social media since November 2025. The battle for Pokrovsk, a strategically important logistics hub in eastern Ukraine, is intensifying and Russian troops are laying siege to the city, but pro-Russian circles online have long since declared Moscow’s victory: dozens of hyperrealistic, AI-generated images and videos depict supposed Ukrainian soldiers laying down their arms, or in tears as they recount how they were press-ganged into combat. The footage feeds an atmosphere of doom and despair: soldiers in Ukrainian uniforms beg not to be sent to the front or describe Ukrainian forces fleeing their destroyed positions.

The content is spreading rapidly on platforms such as TikTok and YouTube, often clocking up millions of views, both at home and internationally. While the supposed soldiers in the videos often speak Russian, subtitles in other languages ensure that the content reaches audiences worldwide. AFP found it on Instagram, Telegram, Facebook and X in a range of languages, from Greek to Polish. It was also picked up by print media in Russian and Serbian.

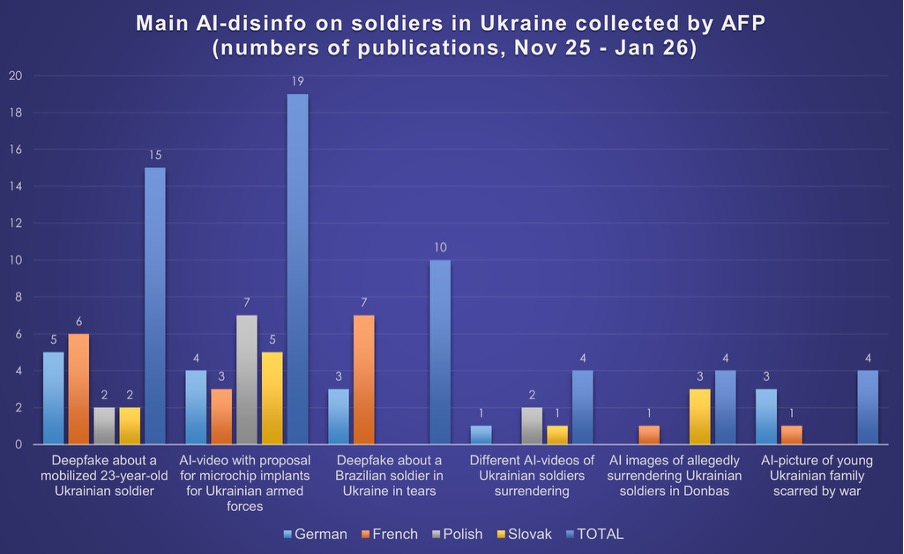

In this investigation for the German-Austrian Digital Media Observatory (GADMO), the AFP Fact Check team looked at more than 70 accounts sharing such AI-generated videos and images in German, French, Polish and Slovak. AFP Fact Check analysed the strategy behind the publication of such manipulated content and examined how it is used to undermine trust in reliable information.

Faulty images and stolen faces: the technical shortcomings of AI propaganda

In a 10-second video clip, we see lines of soldiers in uniforms bearing the blue and yellow colours of Ukraine, standing in muddy fields under grey skies. A look of desperation can be seen on their faces; they weep and hold their hands to their heads in a sign of surrender.

However, on closer analysis, such footage, observed by AFP since early November 2025, displays technical flaws typical of generative AI. Signs that the video has been manipulated include irregular body shapes and errors in image blending. In one section, a seemingly headless soldier can be seen jumping into the air in the background.

In another widely shared video analysed by AFP, an alleged soldier from Pokrovsk walks easily despite having a leg in plaster, while limbs disappear or stretchers seem to float in the background. Such inconsistencies remain characteristic of generative-AI video, although they are becoming increasingly difficult to detect immediately.

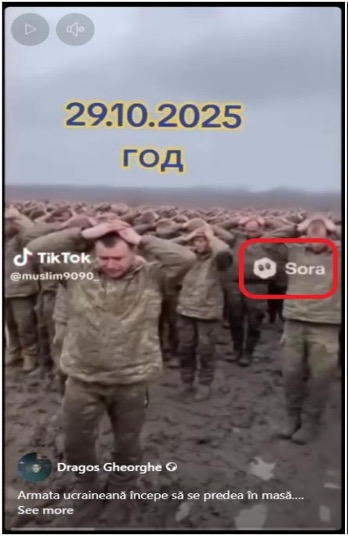

Most of this supposed mobile-phone footage from the front appears to have been produced using Sora 2, OpenAI’s advanced video generator. Tools such as Google’s Nano Banana Pro are also used to generate such images. According to Alice Lee, an expert on Russian influence at NewsGuard, the organisation’s past research has shown that, because of weak safeguards, these “text-to-image AI tools are all too happy to comply in the propagation of false claims”. Lee pointed out that the widely used short-video format makes it easier for users to spread disinformation. “False claims being spread in short-form video demand far less of the viewer and their attention span, and are perfect for a TikTok feed (as opposed to, say, a falsified three-minute news report, which would likely be too long to gain traction on TikTok),” she told AFP.

Since autumn 2025, Sora 2 has allowed users to convert simple text prompts into short, highly detailed videos with natural-looking movements, something earlier models struggled to achieve. Although Sora 2 has not officially launched in Europe, many users there gain access via technical workarounds. Some videos analysed by AFP still display the Sora logo; in others it appears to have been removed afterwards. OpenAI has implemented safeguards such as watermarks and content filters, but these are often circumvented in practice: watermarks are covered with blocks of text or removed using free online tools. Around the time the videos spread, AFP found numerous tutorials, especially in Russian, offering detailed instructions. Distributed on platforms such as YouTube, they explain step by step how to remove the Sora 2 watermark, or which tricks are needed to get around content filters and avoid deletion by platform operators.

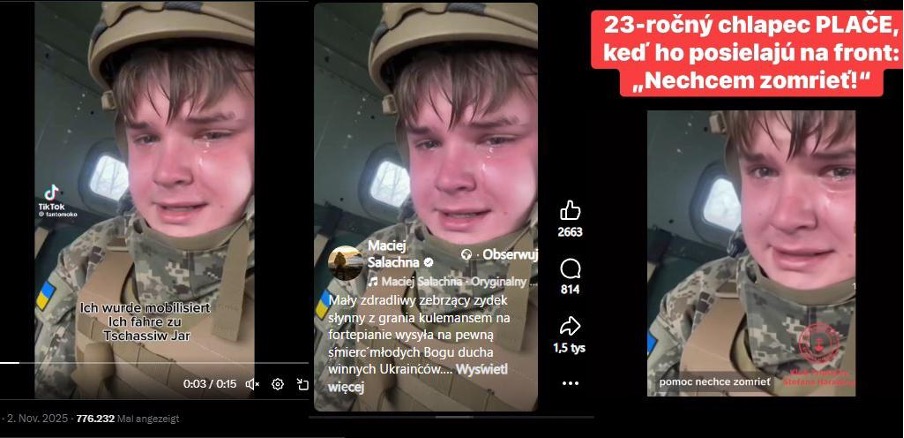

In addition to fully synthetic content, creators also used the faces of real people in their videos. A crying soldier in one widely shared video strongly resembles a Russian content creator who operates online under the pseudonym “Kussia88”. Behind this pseudonym is a young Russian streamer, Vladimir Yuryevich Ivanov, from St. Petersburg. He reacted to the speculation on his Telegram channel without confirming or clearly denying any direct involvement. The Ukrainian Center for Combating Disinformation (CCD) said in a statement that the face in the clip undoubtedly belongs to a Russian, not a Ukrainian, citizen. The trail of the manipulated clip led AFP to a TikTok account, “@fantomoko”, that has since been deleted. There, the video appeared alongside dozens of other AI-produced clips that all followed the same formula: weeping men in Ukrainian uniforms, apparently sitting in military vehicles. In a number of sequences, the official Sora 2 watermark was clearly visible, a clear sign of the tool used to generate the false information.

Another person affected was the exiled Russian YouTuber Alexei Gubanov, whose face appeared in a fake video of a crying Ukrainian soldier. “Clearly, that’s not me,” he clarified in a YouTube video. “Unfortunately, many people believe it is, and it plays directly into the hands of Russian propaganda.”

“Cognitive warfare” targets morale, experts say

The flood of AI-generated content is about much more than generating clicks, experts say. It constitutes a form of strategic communication, much of which can be attributed to pro-Russian actors. The aim is to weaken international support for Ukraine by portraying a demoralised army. According to Lee at NewsGuard, a wide range of narratives is pursued, from mass surrenders and the forced recruitment of underage soldiers to reports of corrupt officials cutting off the population’s power supply. The videos are often closely tied to the news cycle, for example during the fighting for Pokrovsk. In addition, “they specifically target Ukraine’s mobilisation practices – a key pillar of pro-Kremlin false claims since the beginning of the war,” Lee said.

Since Russia invaded Ukraine in February 2022, Kyiv has faced the urgent challenge of mobilising additional soldiers, and the government has taken numerous measures to this end. In April 2024, the minimum age for mobilisation into the Ukrainian armed forces was lowered from 27 to 25. At the end of June 2025, Ukrainian President Volodymyr Zelensky signed a law allowing people over 60 to join the army as well. Other measures include one-year contracts with relatively high bonuses and financial incentives for 18- to 24-year-olds.

Christopher Nehring, an expert and researcher on disinformation and cybersecurity, classifies this AI-generated content as classic “cognitive warfare”, a NATO term, as he explained to AFP. “It’s about influencing the will to defend and resist in Ukraine, but also about framing the narrative for the Russian audience.” The core message is always the same: Ukraine is portrayed as weak, while Russia is portrayed as strong. “The fact that these fakes have been increasing in recent months is no coincidence,” said Nehring, referring to the complicated peace negotiations. “AI is a simple and efficient tool here to transform emotions and messages into images and gestures.”

Experts say they are seeing a “new type” of disinformation. For author and social media expert Ingrid Brodnig, the videos combine AI with a form of “hyper-emotionality”. Whether they show weeping soldiers or desperate deserters, “the whole thing is not real, but it is intended to make an impression on the emotional level,” Brodnig told AFP. “From the beginning, Russia was keen to give the impression that Ukraine had no chance. These videos now fit in remarkably well.” And the strategy is effective: certain emotions such as anger, fear or contempt greatly increase the likelihood that content will be shared. Brodnig suspects that Russian narratives are being deliberately deployed. “The impression being created is that it was a mistake for Ukraine to defend itself against Russia’s war of aggression.”

From a socio-psychological perspective, the aim of disseminating these videos is to systematically wear the enemy down. According to Roland Imhoff, Professor of Social and Legal Psychology at Johannes Gutenberg University Mainz, the calculation behind the videos is to undermine morale, a well-known propaganda goal that is now gaining new power through AI. The targeted dissemination of images of exhaustion and remorse is intended to give the country and its international supporters “perhaps the final push” toward de facto surrender, Imhoff told AFP.

The machinery of disinformation: From TikTok to GRU-linked networks

TikTok told AFP in November 2025 that accounts that appeared to be behind such videos had been deleted, some of which had accumulated more than 300,000 “likes” and several million views. OpenAI said it had investigated but provided no further details.

Although some companies have shown a willingness to combat the misuse of their tools, “the scale and impact of information warfare outweigh the ability of companies to respond”, Pablo Maristany de las Casas, an analyst at the Institute for Strategic Dialogue (ISD), told AFP in November 2025.

Moreover, the exact origin of such content is often difficult to establish beyond doubt, because its creators “carefully erase” their digital tracks, Maristany de las Casas told AFP in January 2026 regarding the AI-generated videos of Ukrainian soldiers. The profiles that share this content are primarily “pro-Russian or benevolent accounts across different languages and geographies”. Their activity does “not necessarily show signs of coordinated activity,” the expert said.

Nevertheless, similarities in content suggest that it follows a certain pattern, as NewsGuard analyst Lee observed. “We wouldn’t exclude malign actors or coordinated behaviour, especially as the videos are often remarkably similar to each other across different platforms and languages, and are clearly intended to discredit the Ukrainian army.” Clear evidence of coordination is lacking, however. Because most videos originate from anonymous TikTok and YouTube accounts that are deleted quite quickly, it is difficult to obtain data about their origin. Lee pointed out that TikTok and YouTube are accessible in Russia via VPNs, which are widely used in the country. Even so, the choice of these platforms strongly suggests that the videos are intended for an audience outside Russia.

Specific cases analysed by AFP illustrate the doubts surrounding the authenticity of supposed sources. The handle of a since-deleted TikTok account, @muslim9090_, can be seen on AI-generated videos of deserting Ukrainian soldiers investigated by AFP. The account bore the display name “Orc from Kharkov”, written in Russian and using the Russian name for the city (Kharkov rather than the Ukrainian Kharkiv). “Orc” is also a derogatory term for Russian soldiers commonly used in Ukraine. The profile design thus suggests a pro-Russian actor based in the embattled Ukrainian city of Kharkiv itself. However, given the Ukrainian authorities’ rigorous search for saboteurs, operating such a conspicuous social media profile in a city close to the front would be extremely risky, as Anna Malpas, AFP reporter on disinformation, explained. Rather, the handle (“Muslim” is a common first name in the North Caucasus) points to a digital staging that is merely intended to simulate local authenticity.

Such videos were also published in Russian on YouTube and Facebook, in some cases racking up millions of views. AFP also identified such clips in numerous Russian-language Telegram channels, including the account “Rezident”. The name suggests an agent operating “under cover”, while the abbreviation “ua” in the handle feigns Ukrainian origin. The channel regularly claims to provide exclusive insider information from Ukrainian government circles, Malpas said, and consistently spreads pessimistic forecasts about the course of the war and the situation in Ukraine.

As early as 2022, the Ukrainian security service SBU placed the “Rezident” channel on a list of untrustworthy sources, classifying it as a “pseudo-Ukrainian Telegram channel” acting in Russia’s interests. This assessment is consistent with a January 2026 report by the analysis firm OpenMinds, which assigns “Rezident” to a specific category of actors: pseudo-Ukrainian channels, often launched long before the full-scale invasion to strategically promote Russian narratives within Ukraine. According to the report, this channel, along with accounts such as “Legitimniy” and “Ze_Kartel”, belongs to a group reportedly linked to the 85th centre of the Russian military intelligence service GRU. Many of these channels share identical anti-Ukrainian content or are run by sanctioned former Ukrainian politicians wanted for collaboration with Russia. OpenMinds’ analysis also shows that these channels have hardly any connections to real Ukrainian Telegram clusters but are deeply embedded in the Russian information space. Today, they serve less to influence Ukrainian users than as a “simulated Ukrainian opposition” that gives the Russian audience a distorted picture of the situation.

The use of generative AI is not a one-sided phenomenon. AI content is also increasingly being used on the Ukrainian side and in pro-Ukrainian networks to support their own narrative. On TikTok, for example, there are clips showing supposed Russian soldiers complaining about poor equipment or questioning the point of their deployment. Many of these recordings contain elements indicating that they were created with the help of AI. The statements attributed to the Russian soldiers are often strikingly outspoken, and it is doubtful that Russian soldiers would publicly voice such criticism in this form. The boundaries between authentic front-line footage and digital staging are thus becoming increasingly blurred on both sides of the information front.

Propaganda at the push of a button: “AI Slop” is becoming a threat to political reality

Manipulation by AI is not new; it has long since become a strategic tool. What often begins as a harmless gimmick or clickbait is increasingly being used systematically to distort political realities, especially in the wake of major diplomatic events.

Recent data from the fact-checking teams of the European Digital Media Observatory (EDMO), the European fact-checking network to which GADMO belongs, show that migration and Ukraine were the most affected topics in December 2025, each accounting for nine percent of all fact-checks examined. Of a total of 1,605 articles analysed, 146 dealt with disinformation about Ukraine.

The use of AI in fake news reached a new record high in December 2025: 16 percent of all cases investigated, a total of 252 articles, involved the technology. This even surpassed the previous record of 14 percent, set only in November 2025. On the one hand, the high click-through rate of emotional content on social media platforms makes it easy to earn money, as the EDMO report from January 2026 states. On the other hand, the technology serves to further polarise public debate, which benefits extremist forces in particular. Organised campaigns such as Russian war propaganda are increasingly relying on AI, as it allows convincing fakes to be produced quickly and cheaply in large numbers.

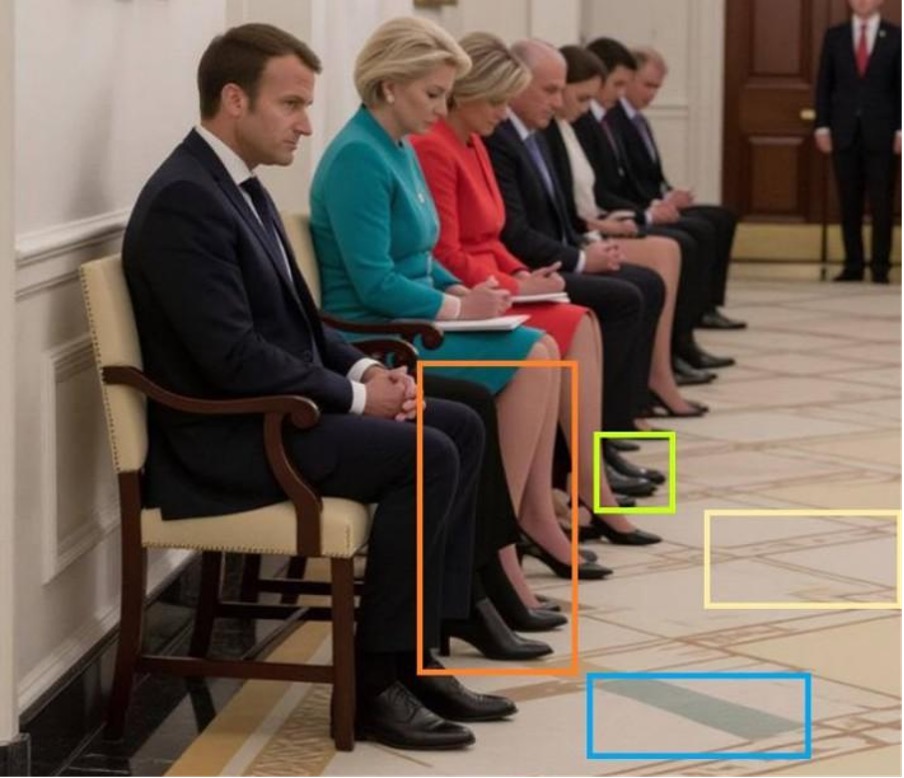

This type of manipulation was already actively used in the summer of 2025. While political decisions of global significance were being made, AI fakes flooded the web to distort public perception of events. An AFP investigation from last summer showed how massively this so-called “AI slop” floods the media space. A particularly clear example of this dynamic was the staged humiliation of European leaders around the Ukraine summit in Washington in August 2025: an AI-generated image depicting leaders such as Friedrich Merz and Emmanuel Macron as submissive supplicants waiting outside the Oval Office was shared thousands of times. Although the image contained clear technical errors, pro-Russian channels exploited it to discredit the EU delegation as a powerless “coalition of the waiting”.

This competition for attention was further fuelled by bizarre satirical clips, such as depictions of Donald Trump and Vladimir Putin dancing together. Such content floods social networks and competes directly with fact-based reporting, which it increasingly threatens to crowd out because of its enormous reach. According to NewsGuard, these posts follow a systematic pattern: pro-Kremlin sources deliberately use high-profile diplomatic meetings to undermine confidence in Western alliances through AI fakes.

Incomplete content moderation on platforms such as TikTok, X and those run by Meta further facilitates the rapid spread of disinformation. Since viral posts often generate income for their creators, a veritable market has developed for them. Ultimately, this leads to an increasingly distorted public perception of political crises. NewsGuard’s Lee made the same point about the spread of AI-generated videos of deserting soldiers, saying that state actors were not always behind them. “It’s also possible that individual users are trying to monetise TikTok videos.” Because such content often attracts high traffic, it can be financially lucrative for its creators.

Why pro-Russian propaganda is increasingly relying on invisible actors

In any case, the machinery of disinformation has long since become professionalised and uses increasingly subtle methods to cover its tracks in the digital space. The methods used by pro-Russian actors are constantly evolving to circumvent fact-checking and systematically blur the line between truth and fiction. According to an AFP analysis from late November 2025, disinformation actors are increasingly relying on strategies that make it almost impossible to identify the originators. To avoid lawsuits from real people or rapid deletion, deepfake creators now prefer to use AI-generated faces or people from commercial image databases. One example is a supposed “personal shopper” of Olena Zelenska who, in a video, makes claims about the Ukrainian First Lady’s alleged luxury spending; her face, however, comes from a stock database of advertising photos.

At the same time, simpler but effective concealment tactics are gaining importance. In many videos, faces are completely pixelated or the protagonists appear masked while making explosive claims, for example about alleged arms sales by Ukraine to Mexican cartels. Since the speakers cannot be identified, verifying these statements is extremely difficult.

An era in which “AI poisons AI”

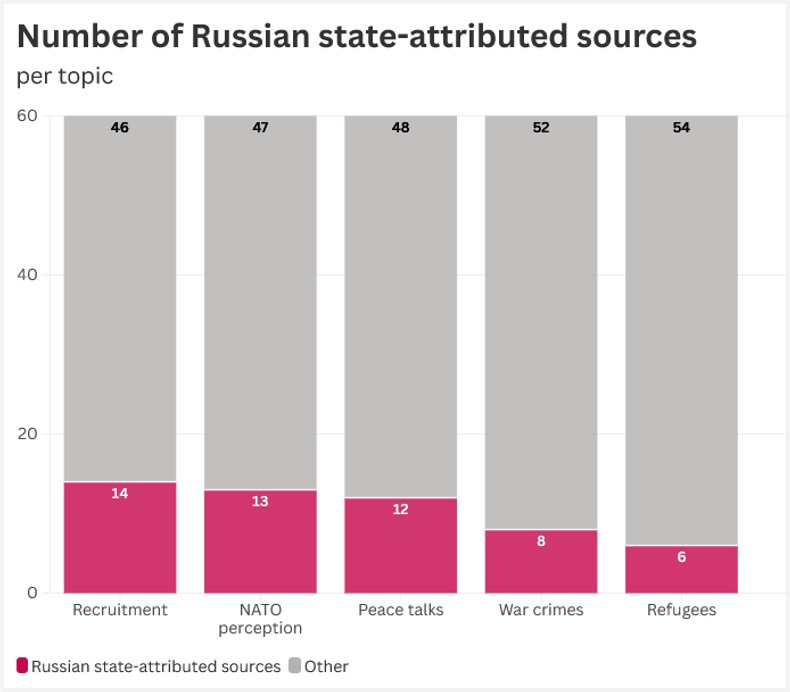

A particularly far-reaching tactic targets the workings of modern AI directly. By publishing false claims en masse, in numerous languages and on a multitude of dubious websites, actors are attempting to manipulate the algorithms behind chatbots such as ChatGPT or Gemini. Because these language models are based on probabilities, they are more likely to treat false narratives as established facts when the internet is systematically “flooded” with them. In this context, experts warn of an era in which “AI poisons AI” as bots incorporate disinformation into their training data. AI chatbots are thus also being used specifically to spread pro-Kremlin narratives. A study published in October 2025 by ISD, as part of a project with AFP funded by the European Media and Information Fund (EMIF), showed that among the chatbots tested, “almost a fifth of the responses cited sources attributable to the Russian state”.

At the same time, disinformation actors are increasingly turning to platforms where content moderation is less stringent, as AFP analysis has shown. These include video games and youth platforms such as Minecraft and World of Tanks. In Minecraft, for example, maps have been created that recreate Russian conquests in Ukraine, such as Bakhmut and Mariupol. The target audience here is primarily young people, who are often less alert to political manipulation in a playful environment. This is complemented by the infiltration of private WhatsApp groups, where conspiracy myths can be spread far from public scrutiny. This constant adaptation of tactics resembles a cat-and-mouse game in which the actors deliberately search for ever new gaps in platforms’ safeguards.

Networked expertise against the flood of disinformation

The findings of this AFP investigation do not stand alone but are part of a larger picture. Other German-speaking organisations in the GADMO network have observed similar developments and exposed numerous pieces of AI-generated content about the war in Ukraine as fake.

The fact-checking team at the German Press Agency (dpa) has been documenting the increased use of AI videos in this context since October 2025: in addition to bizarre clips, such as a video of a Russian soldier with a wild boar near Kupiansk, dpa also examined a video of a crying Ukrainian soldier that turned out to be AI-generated. The Austria Press Agency (APA) addressed such soldier videos in November 2025 and, together with AFP, drew parallels to similar AI content in other conflicts, such as in the Gaza Strip. The fact-checking team at the Correctiv research network has also shown in a series of fact checks how varied AI manipulations are in the context of Russia’s war of aggression in Ukraine.

The acute danger posed by AI disinformation amid diplomatic tensions is demonstrated not least by recent cases ranging from renowned newsrooms to the highest political circles. In the case of the deposed Venezuelan head of state Nicolás Maduro, AI images of his capture even found their way into the reporting of quality media outlets, while the White House recently disseminated manipulated content itself, namely an edited photo of a protester arrested in Minnesota. In such an environment, close cooperation in networks such as GADMO is more necessary than ever to document the extent of manipulation and counter disinformation with facts.

Author: Katharina Zwins / AFP

Copyrights: All rights reserved. Any use of AFP content on this website is subject to the terms of use available on https://www.afp.com/en/useful-links/terms-use. By accessing and/or using any AFP content available on this website, you agree to be bound by the aforementioned terms of use. Any use of AFP content is under your sole and full responsibility.